OPINION

The future is now

Back in 2015, I wrote about Artificial Intelligence and how it would revolutionize some industries and make our lives easier…and better. Fast forward eight years (I’m still trying to wrap my head around the fact that I wrote that article eight years ago…has it really been that long?), and AI is developing rapidly. While I am cautious about that pace and am closely watching as parameters and rules are placed on its development and use, I am on board with the idea that AI can lead to greater efficiency, especially in the workplace. In a time of historic labor shortages, it is important, even vital, for companies to work with what they have. I am not looking to “phase people out of a job” but rather to make jobs more efficient, so that an organization that is short on staff can still deliver on its promises. (Plus, some roles require a human presence and cannot be replaced by AI, and there should be humans present to ensure that the artificial intelligence is doing things correctly.)

Several types of AI

With the rapid development and all the talk surrounding Artificial Intelligence (AI), I wanted to write about the basics. I am still learning and am not a data scientist, but my hope is that I leave you with at least a basic understanding of AI, its history, and the desire to learn more. Because like it or not, AI is here and is already affecting our everyday lives.

AI is broken down into categories based on how well it “thinks” or remembers things. The explanations can get pretty scientific, so for the sake of this article, I will simply list the seven types of AI:

- Reactive Machines

- Limited Memory

- Theory of Mind

- Self-aware

- Artificial Narrow Intelligence

- Artificial General Intelligence

- Artificial Super Intelligence

The concept of AI is not new

The concept of artificial intelligence (AI) and the desire to create intelligent machines started back in ancient times when myths and tales often featured robotic-like humans and mechanical beings. While these early ideas were more rooted in mythology and folklore than in actual technological development, they provided a fascinating glimpse into the human imagination and early musings about the possibility of artificial life.

The mid-20th century saw the growth of Artificial Intelligence because of the advent of electronic computers. The work of people such as mathematician Alan Turing, who became known for his role in breaking the German Enigma code, laid the groundwork for the further development of computers and artificial intelligence. In addition to his work on Enigma, Turing developed what is known as the Turing Test. First proposed in 1950, the Turing Test is used to evaluate a machine’s ability to exhibit intelligent behavior indistinguishable from that of a human.

The Dartmouth Conference

Another milestone in the development of Artificial Intelligence was the Dartmouth Conference of 1956. Organized by John McCarthy, Claude Shannon, and others, the gathering set out to explore the possibility of creating machines with human-like intelligence. This event is often regarded as the official birth of artificial intelligence. In proposing the conference, McCarthy introduced the term “artificial intelligence” to describe the goal of creating machines that could perform tasks that would typically require human intelligence.

“AI Winter” and Resurgence

Despite early successes, the field of AI faced challenges that led to a period known as “AI winter” during the 1970s. Funding and interest in AI research declined as early expectations weren’t met, and the limitations of existing technology became apparent. Although AI looked to be dying, behind-the-scenes work continued and significant advancements in AI were made during the 1980s.

Continued advancements in the development of AI

The late 20th century and early 21st century have witnessed a paradigm shift in AI, with a growing focus on machine learning and data-driven approaches. Advances in computer technology, such as more memory, greater processing power, and the ability to access more information, have accelerated the growth of machine learning.

Machine learning has proved highly effective in various applications, from speech recognition and image classification to recommendation systems and autonomous vehicles.

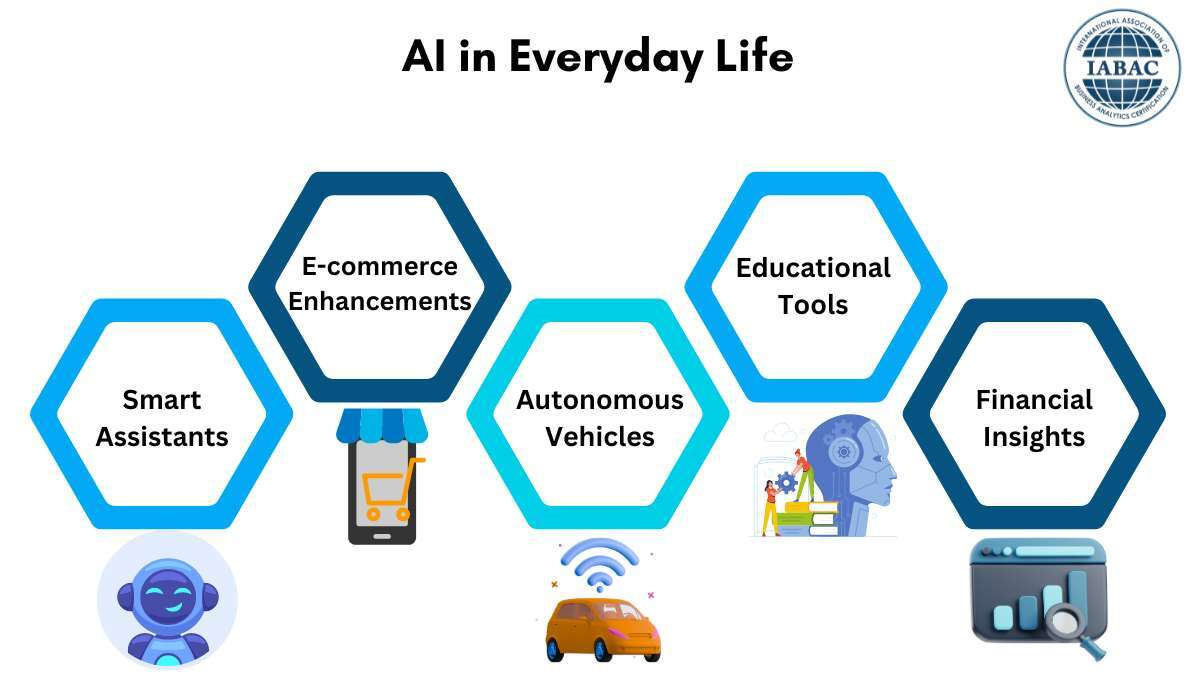

AI in Everyday Life

As AI continues to advance, it has become an integral part of our daily lives. Virtual assistants such as Alexa and Siri, powered by natural language processing and machine learning, have entered households, simplifying tasks and enhancing lives. AI algorithms power recommendation systems on streaming platforms, e-commerce websites, and social media, shaping our preferences and influencing our decisions.

In healthcare, AI has made significant strides in diagnostic imaging, drug discovery, and personalized medicine. Autonomous vehicles, powered by AI, promise to revolutionize transportation, making roads safer and transportation more efficient.

Ethical Considerations and Future Challenges

While the evolution of AI has been awe-inspiring, it has also raised ethical concerns and challenges. Issues related to bias in AI algorithms, privacy concerns, and the potential impact on employment have sparked discussions about responsible AI development.

The future of AI holds both promise and challenges. As advancements in AI increase, ethical considerations and responsible development practices become even more critical. Discussions regarding oversight and limitations have been ongoing and are an important part of this rapidly advancing technology.

Conclusion

The history of AI is an incredible journey, from ancient myths and dreams of mechanical beings to the modern, cutting-edge technologies shaping our world today. The evolution of AI reflects the human quest for understanding and replicating intelligence, with each era building on the achievements of the previous one.

Advancements in AI have been largely positive, and it is widely understood that we need to be cautious, stay aware of the ethical considerations and of our own limitations, and remember that AI is not meant to replace humans. Still, there are people who do not believe that any aspect of AI is good or that it affects their lives. For them, here are a few points:

- If you use a home assistant such as Alexa, Siri, or Google, you are using AI.

- If you use Grammarly or any other writing assistant, you are using AI.

- If you search the internet using Google or Bing, you may be assisted by AI as Google and Microsoft have both added AI to their search engines.

A 2018 Northeastern University and Gallup survey found that 85% of Americans use at least one of six products with AI elements: navigation apps, ridesharing apps, digital assistants, video or music streaming services, smart home devices, and intelligent home personal assistants. (And that was almost six years ago.)

Another fact: I used AI to assist me in writing this article. Why? To prove that AI is not all bad. I told my AI chatbot that I needed to research the history and development of AI. It then wrote a 1,200-word summary in maybe 35 seconds. Research that would have taken me hours took the chatbot 35 seconds. As a journalist (and me being me), I wouldn’t submit an article that I didn’t write, so I proofread, rewrote in “layman’s terms,” and made corrections. The chatbot inspired this article, led me to the proper research, and gave me direction on what to write, because there is so much that can be written about AI. It helped me focus. And I use Grammarly for all my articles, as it helps me become a better writer.

So in my opinion, AI, like anything else, can be used for both good and bad, which is why we need to continue to discuss limitations on its use. But as far as current developments and help with workplace efficiency go, I think it has been good.

As always, if you have any comments, feel free to drop me a message at bchicoinemht@gmail.com.